Delta Hedging & Volatility Trading Simulator

Trading Problem

Short volatility exposure requires managing gamma risk under discrete hedging conditions, where replication is imperfect.

Core Idea

A short option position is dynamically delta-hedged using Black-Scholes Greeks to approximate continuous replication.

The simulation focuses on how hedging frequency and volatility estimation impact PnL outcomes.
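
The Greeks that drive the hedge can be sketched as below. This is a minimal illustration, assuming a European call on a non-dividend-paying underlying; the function names (`norm_cdf`, `norm_pdf`, `bs_call`) and the example parameters are hypothetical and not taken from the simulator's code.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def bs_call(spot, strike, rate, sigma, tau):
    """Return (price, delta, gamma) of a European call under Black-Scholes.

    Assumes no dividends; tau is time to expiry in years.
    """
    if tau <= 0.0:
        # At expiry the option is worth its intrinsic value and has no gamma.
        return max(spot - strike, 0.0), (1.0 if spot > strike else 0.0), 0.0
    sqrt_tau = math.sqrt(tau)
    d1 = (math.log(spot / strike) + (rate + 0.5 * sigma**2) * tau) / (sigma * sqrt_tau)
    d2 = d1 - sigma * sqrt_tau
    price = spot * norm_cdf(d1) - strike * math.exp(-rate * tau) * norm_cdf(d2)
    delta = norm_cdf(d1)
    gamma = norm_pdf(d1) / (spot * sigma * sqrt_tau)
    return price, delta, gamma


# Illustrative inputs: at-the-money, one year, 20% vol, zero rates.
price, delta, gamma = bs_call(100.0, 100.0, 0.0, 0.2, 1.0)
print(f"price={price:.4f} delta={delta:.4f} gamma={gamma:.5f}")
```

A short call position carries the negatives of these sensitivities, so the hedge holds `delta` units of the underlying against each option sold.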

Strategy (Short Volatility Exposure)

The position reflects standard short vol exposures:

- Short gamma → losses from large spot moves in either direction

- Short vega → sensitivity to volatility changes

- Long theta → time decay benefit

PnL is driven by the interaction between:

- Spot movement

- Gamma exposure

- Discrete hedging adjustments
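
The interaction of these three drivers can be illustrated with the standard leading-order approximation for a delta-hedged short option over one hedging interval: the position earns theta at the implied variance rate and pays gamma on the squared realized return. The sketch below assumes zero rates and ignores higher-order terms; the function name `short_gamma_step_pnl` and all inputs are hypothetical.

```python
import math


def short_gamma_step_pnl(spot, gamma, implied_vol, dt, spot_return):
    """Approximate one-interval PnL of a delta-hedged short option.

    Leading-order approximation (zero rates assumed):
        PnL ~ 0.5 * gamma * spot^2 * (implied_vol^2 * dt - spot_return^2)
    """
    theta_gain = 0.5 * gamma * spot**2 * implied_vol**2 * dt  # time decay collected
    gamma_loss = 0.5 * gamma * spot**2 * spot_return**2       # cost of the realized move
    return theta_gain - gamma_loss


# Illustrative inputs: daily hedging, 20% implied vol.
spot, gamma, implied_vol, dt = 100.0, 0.02, 0.20, 1.0 / 252.0
breakeven_move = implied_vol * math.sqrt(dt)  # return size at which the two terms cancel

for move in (0.01, breakeven_move, 0.03):
    pnl = short_gamma_step_pnl(spot, gamma, implied_vol, dt, move)
    print(f"move={move:.4f} pnl={pnl:+.5f}")
```

Under this approximation the sign of the move does not matter: any return larger in magnitude than `implied_vol * sqrt(dt)` loses money for the interval, and smaller returns keep part of the decay.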

Hedging Mechanics

At each time step:

- Compute option delta (Black-Scholes-Merton)

- Rebalance underlying position to maintain delta neutrality

- Track the cash account and its financing cost

- Update portfolio value under self-financing constraint
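
The steps above can be sketched as a single-path hedging loop. This is an illustration under stated assumptions, not the simulator's actual code: a short European call, a constant continuously compounded rate, no transaction costs, and equally spaced rebalancing times. All names (`call_price_delta`, `hedge_short_call`, `simulate_path`) and parameter values are hypothetical.

```python
import math
import random


def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_price_delta(spot, strike, rate, sigma, tau):
    """Black-Scholes price and delta of a European call (requires tau > 0)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * sigma**2) * tau) / (sigma * math.sqrt(tau))
    d2 = d1 - sigma * math.sqrt(tau)
    price = spot * norm_cdf(d1) - strike * math.exp(-rate * tau) * norm_cdf(d2)
    return price, norm_cdf(d1)


def hedge_short_call(path, strike, rate, hedge_vol, maturity):
    """Terminal PnL of selling one call and delta-hedging along `path`.

    `path` holds spot prices at equally spaced times, from t=0 to expiry.
    """
    n_steps = len(path) - 1
    dt = maturity / n_steps
    premium, shares = call_price_delta(path[0], strike, rate, hedge_vol, maturity)
    cash = premium - shares * path[0]  # receive premium, buy the initial hedge
    for i in range(1, n_steps):
        cash *= math.exp(rate * dt)    # financing on the cash account
        _, new_delta = call_price_delta(path[i], strike, rate, hedge_vol, maturity - i * dt)
        cash -= (new_delta - shares) * path[i]  # self-financing: trades are paid from cash
        shares = new_delta
    cash *= math.exp(rate * dt)        # accrue over the final interval
    payoff = max(path[-1] - strike, 0.0)
    return cash + shares * path[-1] - payoff


def simulate_path(spot0, realized_vol, maturity, n_steps, rng):
    """Driftless geometric Brownian motion path (an assumption of this sketch)."""
    dt = maturity / n_steps
    path = [spot0]
    for _ in range(n_steps):
        z = rng.gauss(0.0, 1.0)
        path.append(path[-1] * math.exp(-0.5 * realized_vol**2 * dt + realized_vol * math.sqrt(dt) * z))
    return path


rng = random.Random(0)
path = simulate_path(100.0, 0.2, 0.25, 63, rng)
pnl = hedge_short_call(path, 100.0, 0.0, 0.2, 0.25)
print(f"terminal hedged PnL: {pnl:+.4f}")
```

The cash account changes only through interest and hedge trades, which is what the self-financing constraint requires. Any nonzero terminal PnL is the replication error of the discrete hedge.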

Trading Mapping

This replicates:

- Dynamic hedging of short option exposure

- Real-world gamma risk under imperfect replication

- Volatility mispricing impact on PnL

- Execution friction from discrete rebalancing

Key Insights

- Discrete hedging creates unavoidable replication error

- Gamma dominates PnL near expiry and during large moves

- Volatility mis-specification is the main driver of PnL deviation

- Hedged option PnL is path-dependent: it depends on where and when spot moves occur, not only on a static mark-to-model value
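
A small Monte Carlo experiment can illustrate the first and third points. The sketch below is an assumption-laden toy, not the simulator's code: it sells an at-the-money call, hedges at `hedge_vol`, simulates driftless geometric Brownian motion at `realized_vol`, and uses zero rates. The constants and the names `hedged_pnl` and `pnl_stats` are hypothetical.

```python
import math
import random
import statistics

# Illustrative contract: at-the-money call, three months, zero rates assumed.
STRIKE, SPOT0, MATURITY = 100.0, 100.0, 0.25


def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_price_delta(spot, sigma, tau):
    """Zero-rate Black-Scholes call price and delta (requires tau > 0)."""
    d1 = (math.log(spot / STRIKE) + 0.5 * sigma**2 * tau) / (sigma * math.sqrt(tau))
    d2 = d1 - sigma * math.sqrt(tau)
    return spot * norm_cdf(d1) - STRIKE * norm_cdf(d2), norm_cdf(d1)


def hedged_pnl(n_steps, hedge_vol, realized_vol, rng):
    """Terminal PnL of a short call hedged n_steps times on one simulated path."""
    dt = MATURITY / n_steps
    spot = SPOT0
    premium, shares = call_price_delta(spot, hedge_vol, MATURITY)
    cash = premium - shares * spot
    for i in range(1, n_steps + 1):
        z = rng.gauss(0.0, 1.0)
        spot *= math.exp(-0.5 * realized_vol**2 * dt + realized_vol * math.sqrt(dt) * z)
        if i < n_steps:  # rebalance at every step except expiry
            _, new_delta = call_price_delta(spot, hedge_vol, MATURITY - i * dt)
            cash -= (new_delta - shares) * spot
            shares = new_delta
    return cash + shares * spot - max(spot - STRIKE, 0.0)


def pnl_stats(n_steps, hedge_vol, realized_vol, n_paths=1000, seed=0):
    """Mean and sample standard deviation of terminal PnL across paths."""
    rng = random.Random(seed)
    pnls = [hedged_pnl(n_steps, hedge_vol, realized_vol, rng) for _ in range(n_paths)]
    return statistics.mean(pnls), statistics.stdev(pnls)


# Hedging frequency sweep with correctly specified volatility.
freq_stats = {n: pnl_stats(n, 0.2, 0.2) for n in (4, 16, 64)}
for n, (mean, std) in freq_stats.items():
    print(f"steps={n:3d} mean={mean:+.3f} std={std:.3f}")

# Same hedge, but realized volatility exceeds the volatility sold and hedged at.
misspec_mean, misspec_std = pnl_stats(64, hedge_vol=0.2, realized_vol=0.3)
print(f"mis-specified vol: mean={misspec_mean:+.3f} std={misspec_std:.3f}")
```

In this setup, more frequent rebalancing narrows the PnL distribution around zero but never collapses it, while selling and hedging at a volatility below the realized one shifts the mean PnL negative regardless of hedging frequency.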

Core Takeaway

Delta hedging reduces directional exposure, but does not eliminate volatility-driven PnL risk under discrete execution.

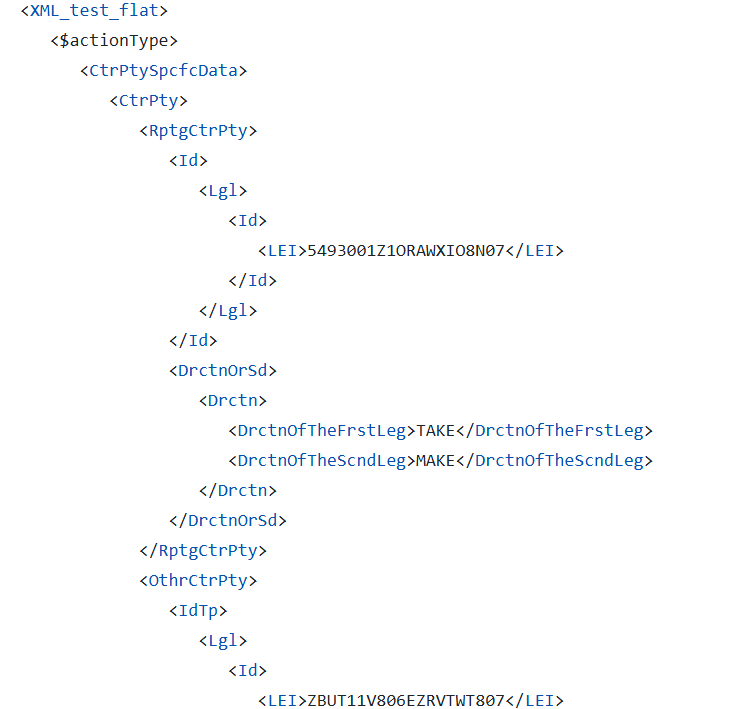

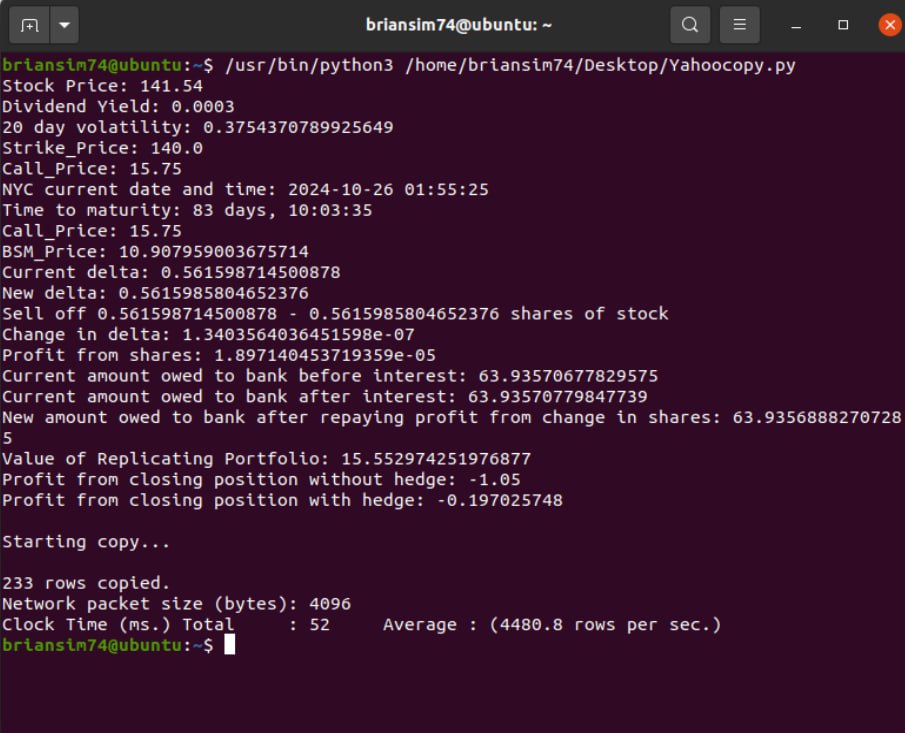

Real-Time Delta Hedging Simulation

Real-time simulation of portfolio rebalancing, gamma exposure, and hedging error under non-continuous execution constraints.

Live Portfolio Evolution under Dynamic Delta Rebalancing

Automated hedging system updating portfolio state in real time, including delta recalculation, underlying adjustments, and PnL evolution under discrete execution intervals.
